Building the Discovery Layer for a Blockchain Ecosystem

Crypto projects communicate through Discord, Telegram, and Twitter. Discord requires login — Google can't index it. Telegram is a closed messaging platform — invisible to search entirely. Twitter is more nuanced: content is technically public, but the platform actively discourages crawling, keeps tightening API access, and is building its own AI silo. If you live on Twitter it's great for engagement. But tweets scroll away in hours, and the platform is increasingly designed to keep attention inside rather than let it flow outward to search engines and AI systems.

A project can have tens of thousands of users across these channels and still not exist as far as Google is concerned. Search "what is Bigcoin" and Google auto-corrects to "what is Bitcoin." Voice assistants do the same. The project has real technology: decentralized token distribution, 21 million supply cap, halving events. None of it matters if nobody can find it.

The fix is straightforward: people need to be talking about you in places that don't disappear overnight. Entity pages that rank for your project's name. Reference content that search engines crawl and AI systems cite. Content that compounds instead of scrolling away.

The easy way out is to spend your way through it — pay for PR. CoinDesk charges $15,000-50,000 for a sponsored article. You get a backlink and some SEO proof that you exist, but the traffic itself lasts about 48 hours. For early-stage builders, that's a lot of money for a receipt.

But each project is expected to solve this on its own, on top of building its product, staying active on socials, managing a community, and shipping updates. Discoverability becomes another job stacked onto a team that's already stretched. There is no persistent reference layer for projects that can't afford to pay for one and have no time to build one themselves.

That's what we built for the Abstract blockchain ecosystem.

What We Built

EurekaNews.xyz is a discovery platform for Abstract — an L2 on Ethereum, less than a year old, with real builders shipping real products into channels the broader internet can't see. We announced the platform in October 2025 and have been building on it since.

Our pitch deck (PDF) covers the full architecture, the content flywheel, and the market opportunity. What it can't fully convey is how the pieces connect. Let me walk through that.

How It Started

It started as a news site — a place where anyone in the Abstract community could write about their project, and readers could find indexed, permanent articles instead of tweets. Two people can't cover an ecosystem alone, so we built a writer pipeline: public application, editorial review, author pages, auto-syndication to Twitter, Telegram, and Discord. Six contributors joined. Most people in crypto still prefer tweeting, but the writers who publish with us get permanent articles with real search reach.

The news site needed a glossary. Newcomers needed to look things up — what is Kona, who is Cygaar, what does Cambria do. So I built definition pages. At first they were flat — parent-child relationships. A project and its sub-projects.

Then I added market data and the pages wanted to do more. Then social feeds, and the connections between entities became more important than the definitions themselves. A project has a token. Sometimes an NFT collection. Founders involved in other projects. A parent organization. Infrastructure dependencies that go sideways, not just up and down.

The glossary became an entity graph. Five entity types — person, project, game, protocol, organization — connected to assets (tokens, NFTs), to each other, and to every article that mentions them. The news site became a platform because the ecosystem demanded more than articles.
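To make that shape concrete, here is a minimal sketch of how such a graph could be modeled. It is not our production schema: the class names, the `relate` and `tag_article` helpers, and the relation labels are all hypothetical, chosen only to show the five entity types, their assets, and the article links sitting in one structure.

```python
from dataclasses import dataclass, field
from enum import Enum


class EntityType(Enum):
    # The five entity types named in the text.
    PERSON = "person"
    PROJECT = "project"
    GAME = "game"
    PROTOCOL = "protocol"
    ORGANIZATION = "organization"


@dataclass
class Entity:
    slug: str
    name: str
    type: EntityType
    assets: list[str] = field(default_factory=list)  # token symbols, NFT collections


@dataclass
class EntityGraph:
    entities: dict[str, Entity] = field(default_factory=dict)
    # edges[slug] holds (relation, other_slug) pairs, stored in both directions
    # so sideways relationships are as easy to follow as parent-child ones.
    edges: dict[str, set[tuple[str, str]]] = field(default_factory=dict)
    # mentions[slug] holds the ids of articles tagged to that entity.
    mentions: dict[str, set[str]] = field(default_factory=dict)

    def add(self, entity: Entity) -> None:
        self.entities[entity.slug] = entity
        self.edges.setdefault(entity.slug, set())
        self.mentions.setdefault(entity.slug, set())

    def relate(self, a: str, relation: str, b: str) -> None:
        self.edges[a].add((relation, b))
        self.edges[b].add((f"inverse:{relation}", a))

    def tag_article(self, article_id: str, slugs: list[str]) -> None:
        for slug in slugs:
            self.mentions[slug].add(article_id)

    def neighbors(self, slug: str) -> set[str]:
        return {other for _, other in self.edges[slug]}


# Illustrative usage with two entities from the ecosystem.
graph = EntityGraph()
graph.add(Entity("kittypunch", "KittyPunch", EntityType.ORGANIZATION))
graph.add(Entity("kona", "Kona", EntityType.PROJECT, assets=["$KONA"]))
graph.relate("kona", "parent", "kittypunch")
graph.tag_article("article-1", ["kona"])
```

Storing every edge in both directions is one simple way to let a page list its relationships without caring which side declared them.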

How the Entity Graph Maps an Ecosystem

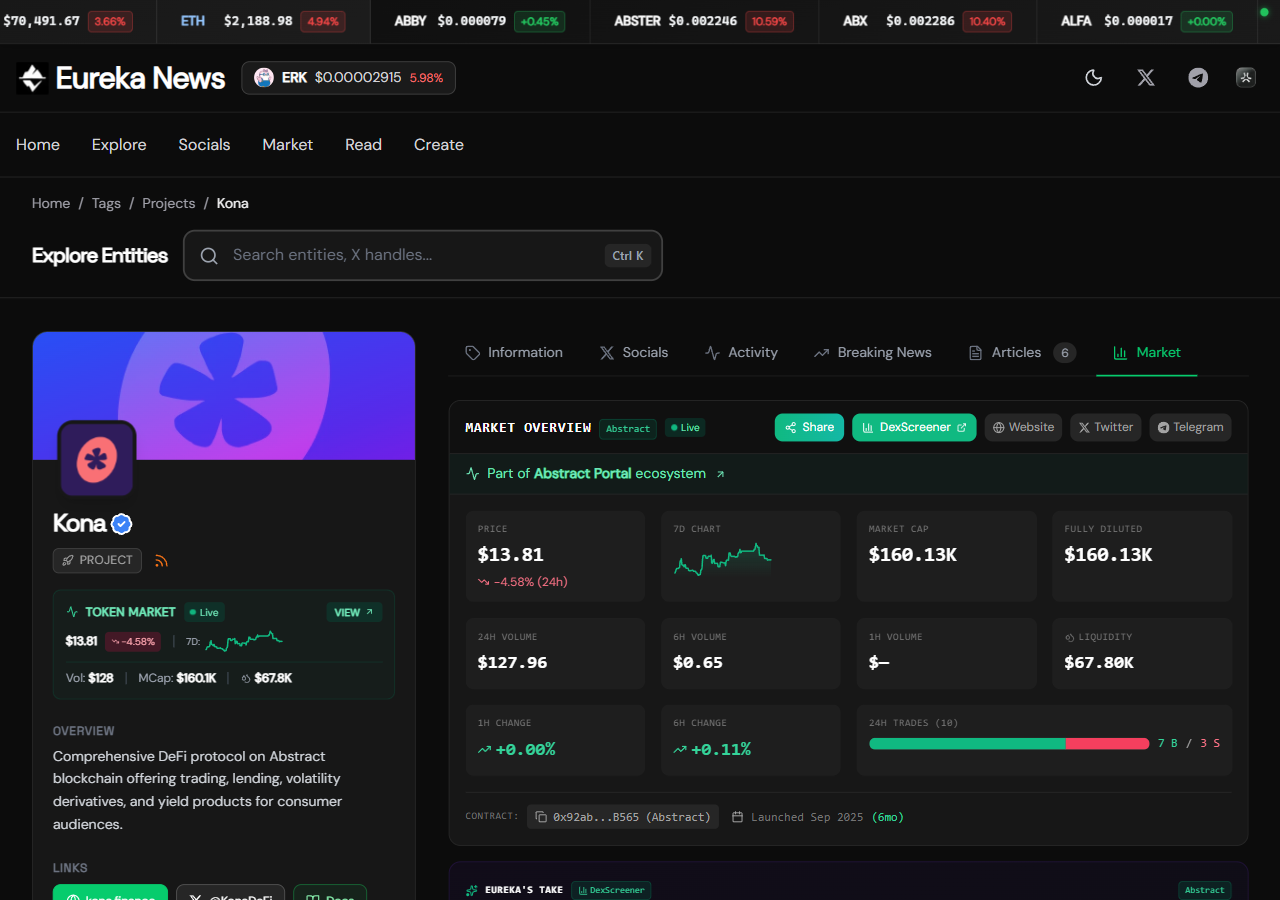

Take a single project — Kona, a DeFi protocol on Abstract:

The entity — Kona is classified as a project. Its page is home to its bio, official links, images, and basic info. It has a parent organization (KittyPunch), a token ($KONA), a memecoin launchpad (Froth.meme), and founders who are connected to other projects. These relationships are mapped in the graph. Visit the Kona page and you see all of them.

The social feed — We track Kona's Twitter account (and KittyPunch's, and their founders'). New tweets show up on Kona's entity page in real time. This is also our source of relationship data — when a tracked account mentions another entity, we see the connection forming. When the ecosystem shifts, the social layer reflects it first.

The market data — Kona's token price from DexScreener (aggregated across all DEX pools), NFT collection stats from OpenSea if applicable, correlated with Abstract's ecosystem-wide metrics from DeFi Llama — TVL, DEX volume, fees, protocol count. All on the entity page, updating continuously.

The articles and breaking news — Every article and breaking news piece is tagged to relevant entities. An article about KittyPunch surfaces on Kona's page too, because the graph knows the relationship. Writers don't have to manually cross-reference — the graph does it. Breaking news captures what's happening right now. Articles capture what happened and why it matters. Both feed the entity page. Both get indexed.
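The cross-referencing described above can be sketched as a one-hop lookup: an entity page shows articles tagged to the entity itself or to any directly related entity. This is an illustration under assumptions, not our actual query. The slugs, the article ids, and the one-hop limit are all hypothetical.

```python
from collections import defaultdict

# Undirected relationships between entity slugs (illustrative data).
RELATED: dict[str, set[str]] = defaultdict(set)


def relate(a: str, b: str) -> None:
    """Record a relationship in both directions."""
    RELATED[a].add(b)
    RELATED[b].add(a)


relate("kona", "kittypunch")  # parent organization
relate("kona", "froth-meme")  # memecoin launchpad

# Article id -> entities the writer tagged directly (made-up ids).
ARTICLE_TAGS: dict[str, set[str]] = {
    "a1": {"kittypunch"},
    "a2": {"kona"},
    "a3": {"cambria"},
}


def articles_for(entity: str) -> list[str]:
    """Return ids of articles tagged to the entity or to a direct neighbor."""
    scope = {entity} | RELATED.get(entity, set())
    return sorted(aid for aid, tags in ARTICLE_TAGS.items() if tags & scope)


kona_articles = articles_for("kona")
```

Here the writer tagged article `a1` only to KittyPunch, yet it appears in `kona_articles` because the lookup widens the scope to Kona's neighbors before matching tags.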

A newcomer researching Kona gets the full picture — what it is, how it relates to the ecosystem, what the market is doing, what the community is saying, what's been written about it — without joining a single Discord server. That page is indexed by Google. It compounds.

Multiply that across 100+ entities and you have a living map of an ecosystem.

How AI Uses the Graph

Each of these layers — entity bios, social feeds, market data, articles, breaking news — creates a trail. We score and store content for later use, track daily activity per entity, log recent breaking news, and rate social signals. AI doesn't operate in a vacuum here. It reads from what the system already knows — the entity's bio, its relationships, its recent activity, its market behavior.

That's what makes the automation work:

Breaking news — We monitor 70+ Twitter accounts. AI scores each tweet for newsworthiness. High-scoring tweets get drafted into breaking news with entity context pulled in — who's involved, what projects are connected, what the market has been doing. Queued for human review, published, tagged, indexed.

Market analysis — Every two hours, AI generates analysis per tracked asset. Because the graph connects Kona to KittyPunch, to the Litany product launch, to Abstract's TVL trend, the analysis is entity-aware:

"KONA trades at $14.47 on Abstract, showing mixed momentum with +1.23% 24h gains offset by -0.66% 1h decline — signaling potential reversal amid extreme fear conditions (Fear/Greed: 15). Despite bullish social sentiment around the imminent Litany product launch and $1M+ in Kona Lend deposits, the token underperforms Abstract's ecosystem TVL growth."

That's not a language model being clever. That's structured entity data making the output actually useful.

Social analysis — AI summarizes activity per tracked handle — sentiment, key topics, upcoming events, actionable takeaway.

Daily ecosystem recap — An overview of the entire ecosystem every day, synthesized from all of the above. What moved. What was announced. What matters.
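The breaking-news step above can be sketched as a triage loop: score each tweet, drop the low scorers, attach the entities it mentions, and queue the rest for human review. Everything here is hypothetical. The keyword scorer is only a stand-in for the AI scoring call, and the threshold, signal words, and field names are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass
class Tweet:
    handle: str
    text: str


@dataclass
class Draft:
    tweet: Tweet
    score: float
    entities: list[str]
    status: str = "pending_review"  # a human approves before anything publishes


def keyword_scorer(tweet: Tweet) -> float:
    """Stand-in for the AI scorer: fraction of signal words present."""
    signals = ("launch", "mainnet", "partnership", "airdrop")
    hits = sum(word in tweet.text.lower() for word in signals)
    return hits / len(signals)


def triage(
    tweets: Iterable[Tweet],
    scorer: Callable[[Tweet], float],
    known_entities: list[str],
    threshold: float = 0.25,
) -> list[Draft]:
    """Keep tweets at or above the threshold, highest score first."""
    queue = []
    for tweet in tweets:
        score = scorer(tweet)
        if score < threshold:
            continue
        text = tweet.text.lower()
        mentioned = [slug for slug in known_entities if slug in text]
        queue.append(Draft(tweet, score, mentioned))
    return sorted(queue, key=lambda d: d.score, reverse=True)


sample = [
    Tweet("kona", "Litany launch is live on Kona"),
    Tweet("someone", "gm"),
]
drafts = triage(sample, keyword_scorer, ["kona", "kittypunch"])
```

Passing the scorer in as a function keeps the review queue logic independent of whichever model does the scoring.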

Each AI process produces artifacts that other processes can reference. The breaking news from yesterday becomes context for today's market analysis. The social sentiment from this morning informs the daily recap. The system accumulates understanding.
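One way to picture this accumulation is a shared artifact store that each process writes to and later processes read from when assembling context. The sketch below is an assumption about the shape, not our implementation. `Artifact`, `ArtifactStore`, `build_context`, the two-day window, and the placeholder texts are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Artifact:
    kind: str  # e.g. "breaking_news", "social_summary", "market_analysis"
    entity: str
    created: datetime
    text: str


class ArtifactStore:
    def __init__(self) -> None:
        self._items: list[Artifact] = []

    def put(self, artifact: Artifact) -> None:
        self._items.append(artifact)

    def recent(self, entity: str, now: datetime, window: timedelta) -> list[Artifact]:
        """Artifacts for one entity inside the window, oldest first."""
        cutoff = now - window
        hits = [
            a for a in self._items
            if a.entity == entity and cutoff <= a.created <= now
        ]
        return sorted(hits, key=lambda a: a.created)


def build_context(
    store: ArtifactStore,
    entity: str,
    now: datetime,
    window: timedelta = timedelta(days=2),
) -> str:
    """Flatten recent artifacts into text a later process can read as context."""
    return "\n".join(
        f"[{a.kind}] {a.text}" for a in store.recent(entity, now, window)
    )


now = datetime(2026, 1, 10, 12, 0)
store = ArtifactStore()
store.put(Artifact("social_summary", "kona", now - timedelta(hours=3), "placeholder summary"))
store.put(Artifact("breaking_news", "kona", now - timedelta(days=1), "placeholder headline"))
store.put(Artifact("breaking_news", "kona", now - timedelta(days=10), "stale item"))
context = build_context(store, "kona", now)
```

In this example yesterday's breaking news and this morning's social summary both land in `context`, while the ten-day-old item falls outside the window and is left out.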

Where We Are

Five months in. I used to spend hours a day on content management: writing breaking news, checking social feeds, updating market data, keeping entity pages current. Now I spend about five minutes a day. Rob Garner advises us on SEO. He has 30+ years in search, is ex-iCrossing/Hearst, and is the author of Search and Social (Wiley). To his credit, Rob kept pushing me to envision what the content automation and agentic angle could become. That nudge shaped a lot of what the platform is today.

We're currently in discussions with the Abstract community team and other ecosystems about how to apply this elsewhere.

The discoverability problem is structural and chain-agnostic. We might be wrong about Abstract's timing specifically — ETH is down, market attention shifted to AI, the chain is young. But the entity graph and the content flywheel are the parts that transfer. The infrastructure compounds regardless of timing. Entity pages keep getting indexed. Domain authority grows. When we bring the platform to ecosystems in their growth phase, the foundation is already there.